Introduction: In the realm of Python programming, encountering errors is inevitable. Whether you’re a beginner or an experienced developer, understanding…


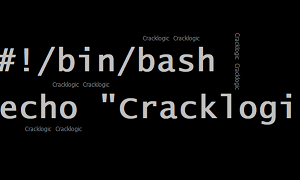

Given a CSV file named Items with the contents below, write a shell script to calculate…


In this post, you’ll grasp the fundamental syntax for opening a file and reading its contents, while learning how to…
